As I wrote in my

last blog article I have been playing around with node.js and DB2 on IBM Bluemix. Now I am done with my first server-side JavaScript application which I named

"db2visitors".

When accessing the main page (index page), the app logs the visitor's IP address. Then a free geo location service is called to obtain extended information, such as country, region and city information of the visitor. This is how Google Analytics and other services know more about a website user, with the exception that many have their own database. That extended IP record is then inserted into a

DB2 table and the information displayed on a "DB2 got you" welcome page. To report back about from where the visitors to my demo application came, I created a second page that returns the aggregated country and region information for all visitors so far. How does it work? Let's look into the details.

First of all, I uploaded my sample code to

my Github "db2visitors" repository, so that you can get an impression of the directory structure and files. It is pretty simple and you will notice that I am new to

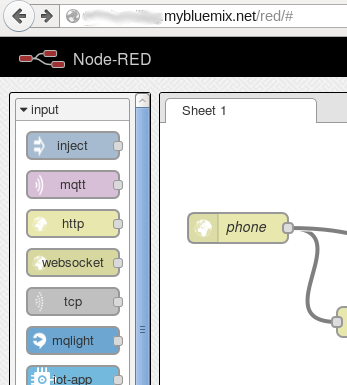

node.js. The application consists of a main file "app.js" that sets up the general infrastructure and defines the handling of so-called routes. A route basically determines what needs to be executed for a specific request to the Web server. When a user visits the main or index page, the node/Express framework would serve the request by calling my new function getIP(). That function is implemented in a file "db2access.js" in the "routes" directory. The current version as of now is shown below.

|

| SQLDB Control Center with Table DDL |

The function getIP obtains the visitor's IP address. Then it uses that information to provide it to an external IP address geo location service. That service returns a JSON structure with country, region, city and other data it has on file for the IP address or the related address block. Sometimes it nails it, (fortunately) in many cases only the country is correct. Anyway, it is a free service and you get an idea of Google and other advertising companies can do with your data. Once that extended record is available, we call into the function insertIP(). There, a DB2 connection is opened, a SQL statement prepared and executed to insert the IP address data into a relational table IP.VISITORS (schema shown on the right, it was created using the Bluemix SQLDB Control Center). Some form of basic IP logging. Last in the function getIP, a function render() is called with the parameter "index". It tells the rendering engine to process the HTML templates and page segments for the view "index". All the view-related files are in exactly that directory (

see my Github repository).

The last function in the file db2access.js is listCountries(). It demonstrates some basic reporting, i.e., how to issue a DB2 query from node.js using the ibm_db API, fetch the result and pass it to a template page. That page is displayed when going to the path "/visits" (as defined in app.js).

You can test it here. The query uses a simple GROUP BY to aggregate the country and region data.

That is all for today. Let me know if you have questions (comment, tweet or email) and read my other

database on Bluemix-related blog entries.

1: // Written by Henrik Loeser

2: // Some functions to retrieve extended information for a IP address,

3: // to insert that extended IP record into a DB2 table, and to

4: // report some basic statistics as part of a second HTML page.

5:

6:

7:

8: // Insert the JSON record with the IP information as SQL record into DB2.

9: exports.insertIP = function(ibmdb,connString,ipinfo) {

10: console.log("insertIP called",ipinfo);

11: ibmdb.open(connString, function(err, conn) {

12: if (err ) {

13: res.send("error occurred " + err.message);

14: }

15: else {

16: // prepare the SQL statement

17: conn.prepare("INSERT INTO IP.VISITORS(vtime,ip,country_code,country,region_code,region,city,zip,latitude,longitude,metro,area) VALUES (current timestamp,?,?,?,?,?,?,?,?,?,?,?)", function(err, stmt) {

18: if (err) {

19: //could not prepare for some reason

20: console.log(err);

21: return conn.closeSync();

22: }

23:

24: //Bind and Execute the statment asynchronously

25: stmt.execute([ipinfo["ip"],ipinfo["country_code"],ipinfo["country_name"],ipinfo["region_code"],ipinfo["region_name"],ipinfo["city"],ipinfo["zipcode"], ipinfo["latitude"], ipinfo["longitude"],ipinfo["metro_code"],ipinfo["area_code"]], function (err, result) {

26: console.log(err);

27: // Close the connection to the database

28: conn.close(function(){

29: console.log("Connection Closed");

30: });

31: });

32: });

33: }

34: })};

35:

36:

37: // Get the caller's IP address from runtime environment and call

38: // geo location service to obtain extended data

39: exports.getIP=function(request,ibmdb,connString) {

40: return function(req, res) {

41: var ip = req.headers['x-client-ip'] || req.connection.remoteAddress;

42: var ipurl = 'http://freegeoip.net/json/' + ip;

43: console.log("yes, called");

44: // fetch ip info

45: request.get( {

46: url: ipurl,

47: json : true},

48: function(error, response, body) {

49: var ipinfo;

50: if (!error) {

51: ipinfo=body;

52: // insert IP info into DB2

53: exports.insertIP(ibmdb,connString,ipinfo);

54: // finish by rendering the HTML page

55: res.render('index',{ ipinfo : ipinfo});

56: }

57: });

58: }

59: };

60:

61:

62:

63: // Very simple country/region-based reporting done using GROUP BY.

64: exports.listCountries = function(ibmdb,connString) {

65: return function(req, res) {

66:

67: ibmdb.open(connString, function(err, conn) {

68: if (err ) {

69: res.send("error occurred " + err.message);

70: }

71: else {

72: conn.query("SELECT country, region, count(region) as rcount FROM ip.visitors group by country,region order by 3 desc", function(err, tables, moreResultSets) {

73:

74:

75: if ( !err ) {

76: res.render('tablelist', {

77: "tablelist" : tables

78:

79: });

80:

81:

82: } else {

83: res.send("error occurred " + err.message);

84: }

85:

86: /*

87: Close the connection to the database

88: param 1: The callback function to execute on completion of close function.

89: */

90: conn.close(function(){

91: console.log("Connection Closed");

92: });

93: });

94: }

95: } );

96:

97: }

98: }